Customising Django Microsoft Entra ID Authentication

I recently had need to add Microsoft Azure / Entra ID authentication to my Workload and Assessment Modelling (WAM) app, which is written in the rather excellent Django Python framework. After a little bit of desk research it seemed clear that django-auth-adfs looked like a suitable package to enable this, and it was pretty quick […]

It's time to admit it. Mailman3 is a hot mess.

Like many people, I used mailman2 for mailing lists extensively for a long time. mailman2 was written in Perl, which, for those of you who know me, is definitely not my favourite programming language, but it pretty much worked. It wasn't beautiful in its web UI, but it worked. Quite some time ago, as mailman […]

What links an Ecowitt Weather Station, Cumulus MX, and Home Assistant? MQTT

This is absolutely the type of blog post that is written only for those that come behind trying to solve the same problem, and searching the web for help. Or to remind me how I did it. If you don't understand the title, you can safely ignore this one. The Problem: I have an Ecowitt […]

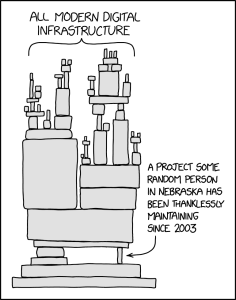

Software Security, Open Source, and the xz affair

Recently, the Free and Open Source Software (FOSS) community, and especially the Linux ecosystem part of it, has been shocked by a malicious backdoor being inserted into the xz compression library, apparently with the goal of compromising SSH (Secure Shell) connections. You can read about the details in articles from the Register, Ars Technica, and a […]

Extracting Sky Router Crash Data Amidst Kernel Panics

I have a Sky broadband connection with fibre into a Sky Router / Sky Hub. I have noticed very short outages of internet service with increasing frequency recently. The outages are short, maybe 3-5 minutes long, but they are annoying enough in the middle of an online meeting or some other synchronous activity. Sometimes […]

exim4 upgrades and configuration fragility

Last night I decided I'd catch up on sysadmin tasks. Some of that was trying to tighten up my spam filtering again. I had got in place a per-user Bayesian filter on spamassassin, which essentially should allow it to learn a much more individual pattern of what each user considers spam. I also had configuration […]

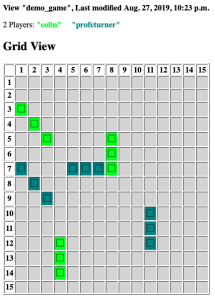

Battleships Server / Client for Education

I've been teaching a first year introductory module in Python programming for Engineering at Ulster University for a few years now. As part of the later labs I have let the students build a battleships game using Object Oriented Programming - with "Fleet" objects containing a list of "Ships" and so on where they could […]

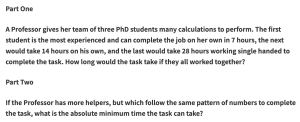

Anatomy of a Puzzle

Recently I was asked to provide a Puzzle For Today for the BBC Radio 4 Today programme which was partially coming as an Outside Broadcast from Ulster University. I've written a post about the puzzle itself, and some of the ramifications of it; this post is really more about the thought process that went into […]

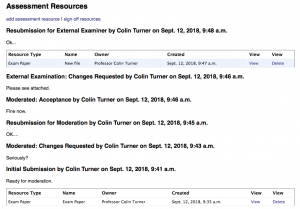

Assessment handling and Assessment Workflow in WAM

Some time ago I began writing a Workload Allocation Modeller aimed at Higher Education, and I've written some previous blog articles about this. As is often the way, the scope of the project broadened and I found myself writing in support for handling assessments and the QA processes around them. At some point this necessitates a […]

Migrating Django Migrations to Django 2.x

Django is a Python framework for making web applications, and it's impressive in its completeness, flexibility and power for speedy prototyping. It's also an impressive project for forward planning: it has a kind of built-in "lint" functionality that warns about deprecated code that will be disallowed in future versions. As a result, when Django […]