Customising Django Microsoft Entra ID Authentication

I recently had need to add Microsoft Azure / Entra ID authentication to my Workload and Assessment Modelling (WAM) app, which is written in the rather excellent Django Python framework. After a little bit of desk research it seemed clear that django-auth-adfs looked like a suitable package to enable this, and it was pretty quick […]

It's time to admit it. Mailman3 is a hot mess.

Like many people, I used mailman2 for mailing lists extensively for a long time. mailman2 was written in Perl, which for those of you who know me, is definitely not my favourite programming language, but it pretty much worked. It wasn't beautiful in its web UI, but it worked. Quite some time ago, as mailman […]

What links an Ecowitt Weather Station, Cumulus MX, and Home Assistant? MQTT

This is absolutely the type of blog post that is written only for those who come behind, trying to solve the same problem and searching the web for help. Or to remind me how I did it. If you don't understand the title, you can safely ignore this one. The Problem: I have an Ecowitt […]

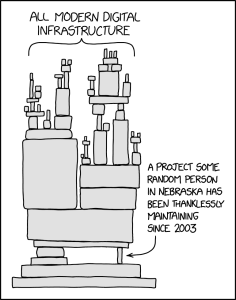

Software Security, Open Source, and the xz affair

Recently, the Free and Open Source Software (FOSS) community, and especially the Linux ecosystem part of it, has been shocked by a malicious backdoor being inserted in the xz compression library, apparently with a goal to compromise SSH (Secure Shell) connections. You can read about the details in articles from the Register, Ars Technica, and a […]

Extracting Sky Router Crash Data Amidst Kernel Panics

I have a Sky broadband connection with fibre into a Sky Router / Sky Hub. I have noticed very short outages of internet service with increasing frequency recently. The outages are short, maybe around 3-5 minutes long, but they are annoying enough in the middle of an online meeting or some other synchronous activity. Sometimes […]

exim4 upgrades and configuration fragility

Last night I decided I'd catch up on sysadmin tasks. Some of that was trying to tighten up my spam filtering again. I had got in place a per-user Bayesian filter on spamassassin, which essentially should allow it to learn a much more individual pattern of what each user considers spam. I also had configuration […]

Semi Open Book Exams

A few years ago, I switched one of my first-year courses to use what I call a semi-open-book approach. Open-book exams of course allow students to bring whatever materials they wish into them, but they have the disadvantage that students will often bring in materials that they have not studied in detail, or even […]

Pretty Printing C++ Archives from Emails

I'm just putting this here because I nearly managed to lose it. This is a part of a pretty unvarnished BASH script for a very specific purpose, taking an email file containing a ZIP of submitted C++ code from students. This script produces pretty printed PDFs of the source files named after each author to […]

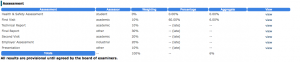

OPUS and Assessment 2 - Adding Custom Assessments

This is a follow-on to the previous article on setting up assessment in OPUS, an on-line system for placement learning. You probably want to read that first. This is much more advanced and requires some technical knowledge (or someone who has it). Making New Assessments: Suppose that OPUS doesn't have the assessment you want, […]

OPUS and Assessment 1 - The Basics

OPUS is a FOSS (Free and Open Source Software) web application I wrote at Ulster University to manage work-based learning. It has been, and is, used by some other universities too. Among its features is a way to understand the assessment structure for different groups and how it can change over years in such […]