What links an Ecowitt Weather Station, Cumulus MX, and Home Assistant? MQTT

This is absolutely the type of blog post that is written only for those who come after, trying to solve the same problem and searching the web for help. Or to remind me how I did it. If you don't understand the title, you can safely ignore this one. The Problem: I have an Ecowitt […]

What FidoNet can teach Green Computing

For a couple of decades, especially from around 2000 to 2020, the field of computing largely ignored its environmental sustainability impact. It's an industry joke that increasing layers of abstraction have reduced the astonishing advances in processing and memory hardware to something of a standstill. And yet, it is predicted that digital emissions […]

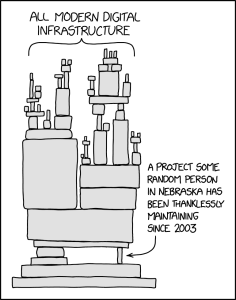

Software Security, Open Source, and the xz affair

Recently, the Free and Open Source Software (FOSS) community, and especially the Linux ecosystem part of it, has been shocked by a malicious backdoor being inserted in the xz compression library, apparently with a goal to compromise SSH (Secure Shell) connections. You can read about the details in articles from The Register, Ars Technica, and a […]

My Spaghetti Bolognese

Like one of my earlier recipes, this is an altered version of a recipe my mum used to use, and it's a firm favourite with my daughters. The main reason for my recording this recipe is for them. This is rather more "meaty" than most Bolognese sauces. This recipe will serve four - at least […]

Dune, Survivor Bias and the National Student Survey

At the time of writing, Dune Part Two has just been released in cinemas. The film is of course based on the 1965 book Dune, which has many fascinating themes. One of these is around what makes the Sardaukar, the troops of the Padishah Emperor, the second most élite soldiers of the known universe, […]

Performative Data Security is Bad Data Security

Most of us have been there. In these days of GDPR and scams, when you call large companies you will usually be told you need to answer some questions to prove who you are before they will discuss your account or case. This makes perfect sense. Most large companies, on the rare occasion they have […]

Extracting Sky Router Crash Data Amidst Kernel Panics

I have a Sky broadband connection with fibre into a Sky Router / Sky Hub. I have noticed very short outages of internet service with increasing frequency recently. The outages are short, maybe around 3-5 minutes long but these are annoying enough in the middle of an online meeting or some other synchronous activity. Sometimes […]

exim4 upgrades and configuration fragility

Last night I decided I'd catch up on sysadmin tasks. Some of that was trying to tighten up my spam filtering again. I had got in place a per-user Bayesian filter on spamassassin, which essentially should allow it to learn a much more individual pattern of what each user considers spam. I also had configuration […]

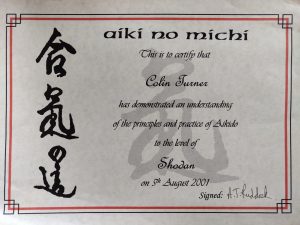

20 years since shodan - reflections on gradings, mastery and imposter syndrome

Today (5th August 2021) marks twenty years since I first graded to shodan (the first black belt grade) in a martial art. It might come as a surprise to many non-martial artists that there are multiple black belt grades, and that a black belt does not represent the end of a journey but more […]

Beef in Soy Sauce

This is a version of a recipe my Mum had that I have experimented with in a slow cooker format. I've pared this down to the simplest version I can. I usually run this recipe by eye, but one of my daughters wants to have a go at it, so I decided I'd […]